In early 2026, the AI landscape reached a crossroads. On one side, we have the “reasoning giants”: GPT-5.4 and Gemini 3.1 Pro. These models offer unprecedented cognitive abilities, but they come with a “Data Tax” that many German firms are no longer willing to pay.

On the other side, a revolution in Small Language Models (SLMs) and open-weight powerhouses like Llama 4 and Mistral Small 4 has arrived. For the German Mittelstand, the choice is becoming clear: data sovereignty isn’t just a legal checkbox; it is a competitive moat.

1. The Sovereignty Dilemma: The Cloud’s Hidden Walls

For years, the trade-off was simple: use a hosted API for the best performance, or run a mediocre model locally. In 2026, that trade-off has evaporated. High-performance AI is no longer a cloud-only privilege, and the risks of “External Intelligence” have become too high to ignore.

The “Thinking” Black Box vs. Auditability

Modern models like GPT-5.4 use complex chain-of-thought (CoT) reasoning to solve problems. For the enterprise user, however, these internal reasoning steps are a “black box.”

- The Compliance Gap: When an AI processes sensitive personnel data or financial forecasts, German compliance officers must be able to audit the decision-making logic.

- The Hidden Risk: With hosted LLMs, you typically see only the output, or at best a summary of the reasoning, not the reasoning path itself. Local models let you log and inspect every generated token of the “thought process,” ensuring that AI-driven automation remains transparent and legally defensible.
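To make “log and inspect every token” concrete, here is a minimal sketch of a tamper-evident audit trail for local inference. It assumes the model emits its intermediate reasoning between `<think>` and `</think>` tags (a convention some open-weight reasoning models follow, not a universal one) and chains each record to its predecessor with a SHA-256 hash, so later edits to the log become detectable. All function names and record fields are hypothetical.

```python
import hashlib
import json
import re

# Assumption: the local model wraps its intermediate reasoning in <think>...</think>.
THINK_RE = re.compile(r"<think>(.*?)</think>", re.DOTALL)
GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first record


def split_reasoning(raw_output: str) -> tuple[str, str]:
    """Separate the (assumed) <think> block from the final answer."""
    match = THINK_RE.search(raw_output)
    reasoning = match.group(1).strip() if match else ""
    answer = THINK_RE.sub("", raw_output).strip()
    return reasoning, answer


def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the record body."""
    canonical = json.dumps(body, sort_keys=True, ensure_ascii=False)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def audit_record(prev_hash: str, prompt: str, raw_output: str) -> dict:
    """One log entry; its hash covers the content and the previous entry's hash."""
    reasoning, answer = split_reasoning(raw_output)
    body = {"prev_hash": prev_hash, "prompt": prompt, "reasoning": reasoning, "answer": answer}
    return {**body, "hash": _digest(body)}


def verify_chain(records: list[dict]) -> bool:
    """Recompute every hash; an edited or reordered entry breaks the chain."""
    prev = GENESIS_HASH
    for rec in records:
        body = {k: rec[k] for k in ("prev_hash", "prompt", "reasoning", "answer")}
        if rec["prev_hash"] != prev or rec["hash"] != _digest(body):
            return False
        prev = rec["hash"]
    return True
```

In practice each record would be appended as one JSON line to write-once storage; the hash chain proves integrity, not authorship.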

Geopolitical Resilience and “AI Nationalism”

We have entered an era of “AI Nationalism.” Relying on a US-based or non-EU backbone for your internal ERP automation or R&D workflows is now recognized as a critical supply-chain vulnerability.

- The Digital Heartbeat: If a foreign provider changes its Terms of Service, implements sudden geo-blocking, or suffers a transatlantic undersea cable disruption, the digital heart of your company stops beating.

- Supply Chain Independence: By running Llama 4 or Mistral on-premise, German firms ensure that their core business logic remains functional even if the global political climate shifts. Sovereignty means that your automation is not a rented service; it is a permanent company asset.
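One way to keep core workflows running when an external provider becomes unavailable is an ordered fallback chain that ends at an on-premise model. The sketch below uses plain callables as stand-ins for real API clients; the backend names, the stand-in functions and the `ProviderUnavailable` exception are all hypothetical.

```python
from typing import Callable


class ProviderUnavailable(Exception):
    """Raised by a backend that cannot serve the request (outage, geo-block, revoked access)."""


Backend = tuple[str, Callable[[str], str]]


def complete_with_fallback(prompt: str, backends: list[Backend]) -> tuple[str, str]:
    """Try backends in order; return (name, answer) from the first one that succeeds."""
    failures = []
    for name, call in backends:
        try:
            return name, call(prompt)
        except ProviderUnavailable as exc:
            failures.append(f"{name}: {exc}")
    raise RuntimeError("all backends failed: " + "; ".join(failures))


# Hypothetical stand-ins: the foreign cloud API is blocked, the on-premise model answers.
def cloud_backend(prompt: str) -> str:
    raise ProviderUnavailable("service not available in this region")


def on_prem_backend(prompt: str) -> str:
    return f"[on-prem] {prompt}"


BACKENDS = [("cloud", cloud_backend), ("on-prem", on_prem_backend)]
```

Putting the on-premise backend last makes it the floor rather than the ceiling; for sensitive prompts it would be the only entry in the list.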

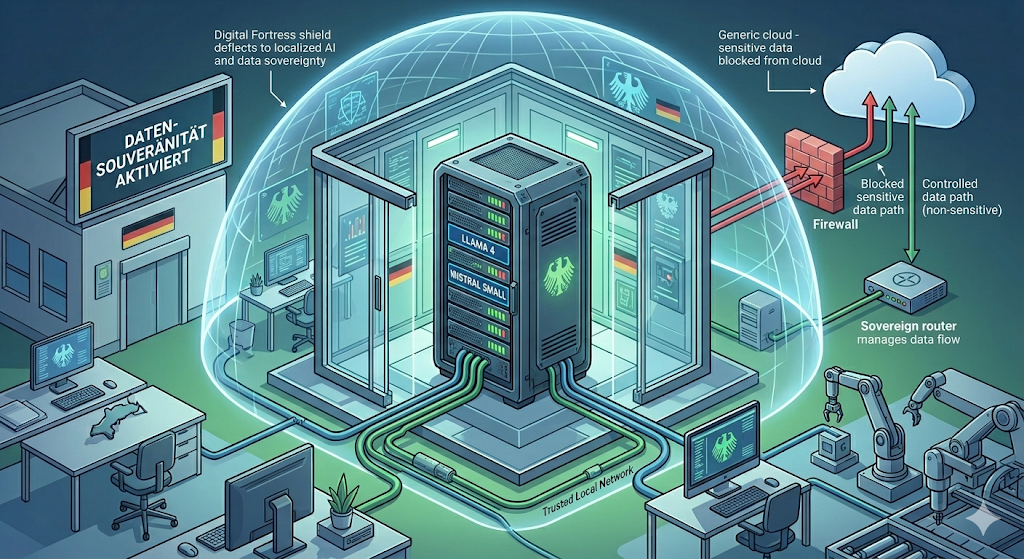

2. Technical Architecture: The 2026 “Sovereign Stack”

The modern stack for a German enterprise is modular, built on trusted database standards and high-efficiency local hardware.

The Memory Engine: PostgreSQL & pgvector

To make an LLM “smart” about your company, you need a vector database. While specialized tools like Qdrant (Berlin-based) offer peak performance, many firms are opting for the pragmatist’s choice: PostgreSQL with pgvector.

By enabling the vector extension, you can store your intellectual property alongside your transactional data.

```sql
-- Initializing the Sovereign Brain in Postgres
CREATE EXTENSION IF NOT EXISTS vector;

CREATE TABLE company_knowledge (
    id        SERIAL PRIMARY KEY,
    content   TEXT NOT NULL,  -- the document chunk
    metadata  JSONB,          -- source info (dept, date, file)
    embedding vector(1024)    -- must match your local embedding model's output size (1024 is an
                              -- assumption); HNSW indexes on vector support up to 2,000 dimensions
);

-- HNSW index for fast approximate nearest-neighbour retrieval by cosine distance
CREATE INDEX ON company_knowledge
    USING hnsw (embedding vector_cosine_ops);
```
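The `vector_cosine_ops` index serves queries ordered by pgvector’s `<=>` operator, which returns cosine distance (1 minus cosine similarity). HNSW gives an approximate answer; the exact result it approximates can be sketched in plain Python, which is also handy for spot-checking recall on a small sample. The helper names and the toy vectors used with them are illustrative only.

```python
import math


def cosine_distance(a: list[float], b: list[float]) -> float:
    """1 - cosine similarity, the quantity pgvector's <=> operator returns."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    if norm == 0:
        raise ValueError("cosine distance is undefined for a zero vector")
    return 1.0 - dot / norm


def exact_top_k(query: list[float], rows: dict[int, list[float]], k: int) -> list[int]:
    """Brute-force ids of the k rows nearest to query; HNSW approximates this ordering."""
    return sorted(rows, key=lambda row_id: cosine_distance(query, rows[row_id]))[:k]


def to_pgvector_literal(vec: list[float]) -> str:
    """Text form accepted by a ::vector cast, e.g. '[0.1,2.0,3.5]'."""
    return "[" + ",".join(repr(float(x)) for x in vec) + "]"


# The equivalent query against company_knowledge, passing the literal as a parameter:
#   SELECT id, content FROM company_knowledge
#   ORDER BY embedding <=> %s::vector
#   LIMIT 5;
```

Comparing `exact_top_k` with what the index returns on a sample of queries gives a rough recall figure when tuning `hnsw.ef_search`.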

The Hybrid Shield: NemoClaw vs. Open-Source Routers

The most significant addition to the 2026 stack is the Privacy Router.

- NVIDIA NemoClaw: A high-performance gateway that automatically identifies sensitive PII (Personally Identifiable Information) and reroutes those queries to local GPUs.

- The Sovereign Router (Open Source): A custom middleware that acts as a “Data Air-Lock,” scanning incoming prompts for German keywords (e.g., Personalakte, Geheim).
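Keyword matching can be complemented with pattern detectors for structured PII. The sketch below flags German IBANs (validated with the standard IBAN mod-97 checksum to cut false positives) and e-mail addresses alongside the keyword list. The term list, the regular expressions and the function names are illustrative assumptions, not a complete PII classifier.

```python
import re

# Illustrative lists and patterns only; not a complete PII classifier.
SENSITIVE_TERMS = ["quartalszahlen", "entwicklungsplan", "geheim", "intern", "personalakte"]
IBAN_DE = re.compile(r"\bDE\d{2}(?:\s?\d{4}){4}\s?\d{2}\b")
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


def iban_checksum_ok(candidate: str) -> bool:
    """IBAN mod-97 check: move the first four characters to the end, letters become 10..35."""
    compact = re.sub(r"\s", "", candidate).upper()
    rearranged = compact[4:] + compact[:4]
    return int("".join(str(int(ch, 36)) for ch in rearranged)) % 97 == 1


def airlock_findings(prompt: str) -> list[str]:
    """Reasons the prompt must stay on-premise; an empty list means it may be sent out."""
    lowered = prompt.lower()
    # Substring matching errs toward the local route on purpose ("intern" also hits "Internet").
    findings = [f"keyword:{term}" for term in SENSITIVE_TERMS if term in lowered]
    findings += ["iban" for m in IBAN_DE.finditer(prompt) if iban_checksum_ok(m.group())]
    findings += ["email" for _ in EMAIL.finditer(prompt)]
    return findings
```

Routing on `bool(airlock_findings(prompt))` instead of a single flag also gives the compliance log a reason for every local decision.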

3. Implementation: The Sovereign Router in Action

Below is a conceptual example of how a German firm might implement this routing logic using PostgreSQL and Llama 4.

```python
import requests
import openai  # used only for non-sensitive "overflow" queries
from loguru import logger

OLLAMA_URL = "http://local-ai-server:11434/api/generate"
LOCAL_MODEL = "llama4:scout"  # assumed Ollama tag; check `ollama list` on your server
SENSITIVE_TERMS = ["quartalszahlen", "entwicklungsplan", "geheim", "intern"]


def sovereign_ai_router(user_prompt: str) -> str:
    # 1. Privacy check (the "Air-Lock"): substring matching errs toward the local route
    is_sensitive = any(term in user_prompt.lower() for term in SENSITIVE_TERMS)

    if is_sensitive:
        # 2. LOCAL ROUTE: query the internal Postgres (pgvector) DB
        logger.info("Routing to Local Llama 4 (Sovereign Mode)...")
        context = query_local_postgres(user_prompt)  # hypothetical retrieval helper, defined elsewhere

        # Inference via the local Ollama instance; "stream": False returns a single JSON object
        response = requests.post(
            OLLAMA_URL,
            json={
                "model": LOCAL_MODEL,
                "prompt": f"Context: {context}\n\nQuery: {user_prompt}",
                "stream": False,
            },
            timeout=300,
        )
        response.raise_for_status()
        return response.json()["response"]

    # 3. CLOUD ROUTE: generic queries can use GPT-5.4
    logger.info("Routing to GPT-5.4 (Performance Mode)...")
    client = openai.OpenAI()  # reads OPENAI_API_KEY from the environment
    completion = client.chat.completions.create(
        model="gpt-5.4",
        messages=[{"role": "user", "content": user_prompt}],
    )
    return completion.choices[0].message.content
```
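Ollama’s `/api/generate` endpoint streams by default unless the request sets `"stream": false`: it sends one JSON object per line, each carrying a `response` fragment, and a final object with `done` set to true. A minimal stdlib sketch for reassembling such a stream (for example, to log partial output as it arrives) could look like this; the function name is hypothetical.

```python
import json
from typing import Iterable, Union


def collect_stream(lines: Iterable[Union[str, bytes]]) -> str:
    """Join the 'response' fragments of an NDJSON stream, stopping at the object with done=true."""
    parts = []
    for raw in lines:
        if not raw or not raw.strip():
            continue  # tolerate blank keep-alive lines
        chunk = json.loads(raw)
        parts.append(chunk.get("response", ""))
        if chunk.get("done"):
            break
    return "".join(parts)


# With requests (not imported here), the lines would come from the live response:
#   with requests.post(url, json={"model": model, "prompt": prompt},
#                      stream=True, timeout=300) as resp:
#       answer = collect_stream(resp.iter_lines())
```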

4. The Economic Logic: Saving the “Token Tax”

Beyond security, there is a hard financial case. For a typical firm with 500 employees, the “Token Tax” for high-tier hosted models can exceed €15,000 per month.

An on-premise inference server built on NVIDIA RTX 5090 GPUs, or a Mac with an M5 Max chip, can pay for itself in less than a year at that level of spend. Furthermore, quantization (running models at 4-bit or 8-bit precision instead of 16-bit) shrinks memory and bandwidth requirements, so these models run on significantly less electricity, in line with the “Green AI” goals of the European Green Deal.
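Both the payback claim and the memory saving from quantization are simple arithmetic. The sketch below takes the €15,000 monthly figure from above; the hardware price, running costs and the 70-billion-parameter model size are placeholder assumptions to replace with your own quotes.

```python
import math


def payback_months(hardware_eur: float, cloud_eur_per_month: float, local_opex_eur_per_month: float) -> int:
    """Whole months until avoided cloud spend has covered the hardware purchase."""
    monthly_saving = cloud_eur_per_month - local_opex_eur_per_month
    if monthly_saving <= 0:
        raise ValueError("local running costs match or exceed the cloud bill; no payback")
    return math.ceil(hardware_eur / monthly_saving)


def weight_memory_gb(params_billion: float, bits_per_weight: int) -> float:
    """Approximate weight storage in GB (1e9 params x bits/8 bytes); ignores KV cache and overhead."""
    return params_billion * bits_per_weight / 8


# Placeholder inputs, not quotes: EUR 120,000 of hardware and EUR 3,000/month for power and
# upkeep, against the EUR 15,000/month cloud bill mentioned above.
MONTHS = payback_months(120_000, 15_000, 3_000)  # 120,000 / 12,000 = 10 months
FP16_GB = weight_memory_gb(70, 16)               # hypothetical 70B model: 140 GB
INT4_GB = weight_memory_gb(70, 4)                # same model at 4-bit: 35 GB
```

Swapping in real figures for hardware, electricity and maintenance turns the “less than a year” claim into a number your controller can check.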

5. Conclusion: Owning the Digital Brain

The future of German industry relies on automation. But for that automation to be sustainable, it must be owned. By combining the reliability of PostgreSQL, the intelligence of Llama 4, and a robust Privacy Router, German firms ensure their intellectual property stays within their walls.

Moving to an on-premise model is the only way to effectively mitigate the Sovereignty Risk: the danger of having your company’s cognitive workflows managed by a third party with misaligned interests. In an era of increasing geopolitical volatility, localized AI is a shield against “API Diplomacy” and external service disruptions.

In 2026, the ultimate luxury in tech is disconnection. The most innovative firms aren’t just using AI; they own it.